Retriever

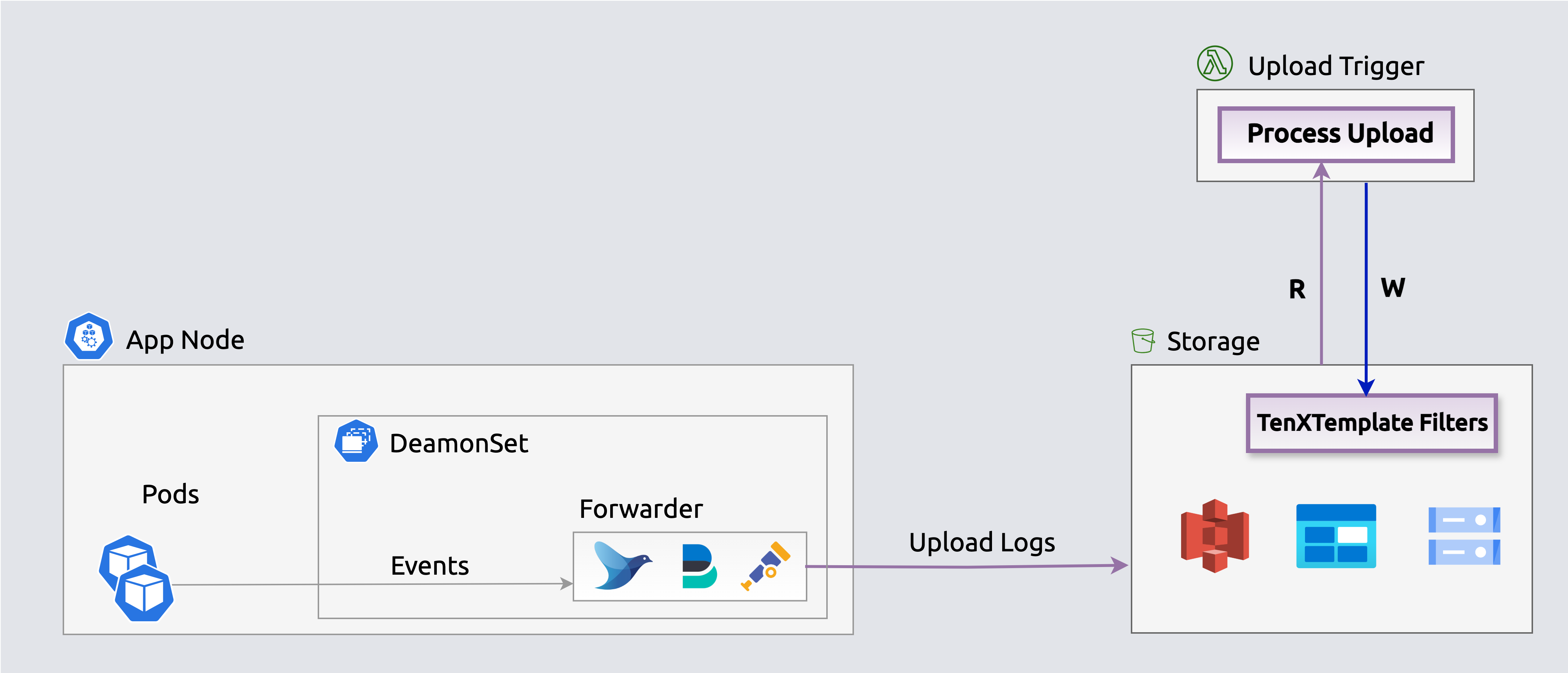

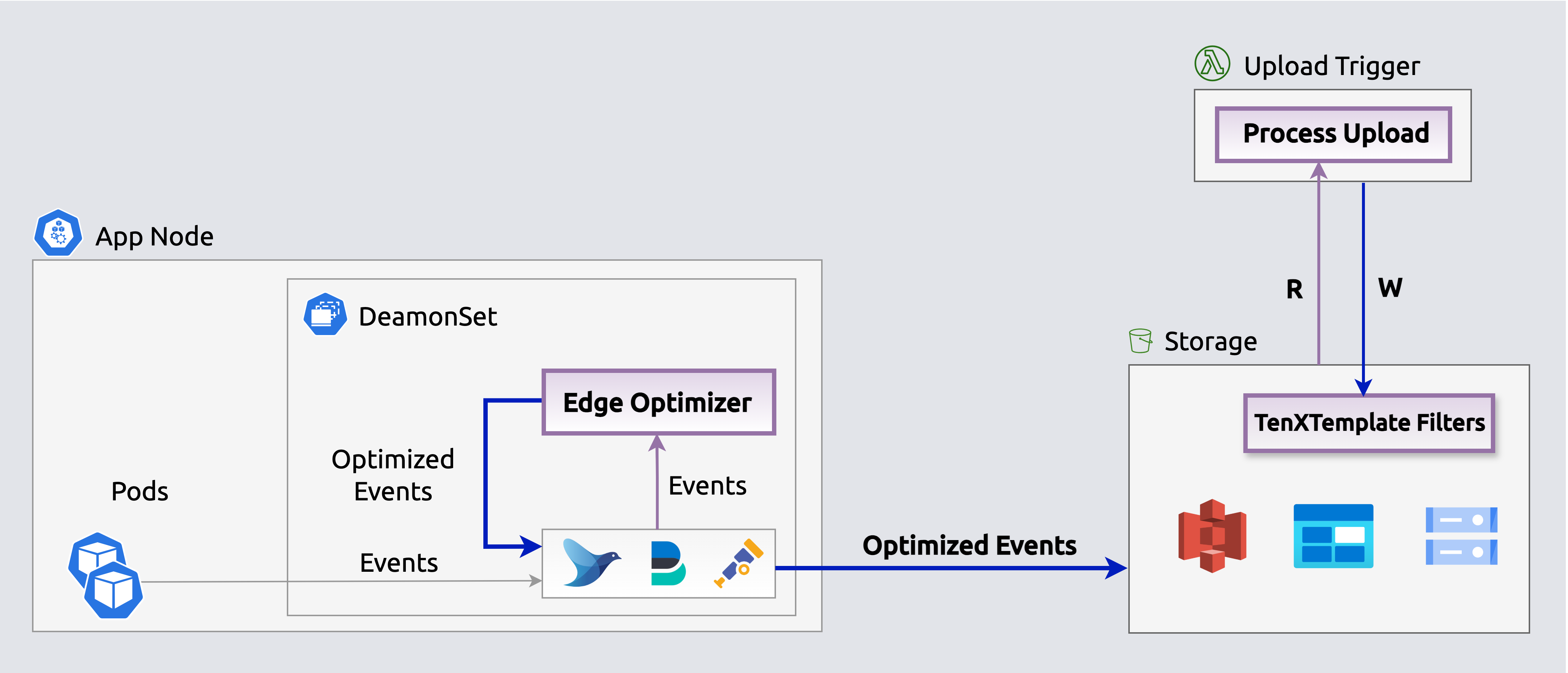

The Retriever indexes log and trace events in object storage (S3, Azure Blobs) and fetches them on demand after they have aged out of your log analyzer's retention window. Use it to backfill a new metric retroactively or to replay an old incident from storage.

Workflow

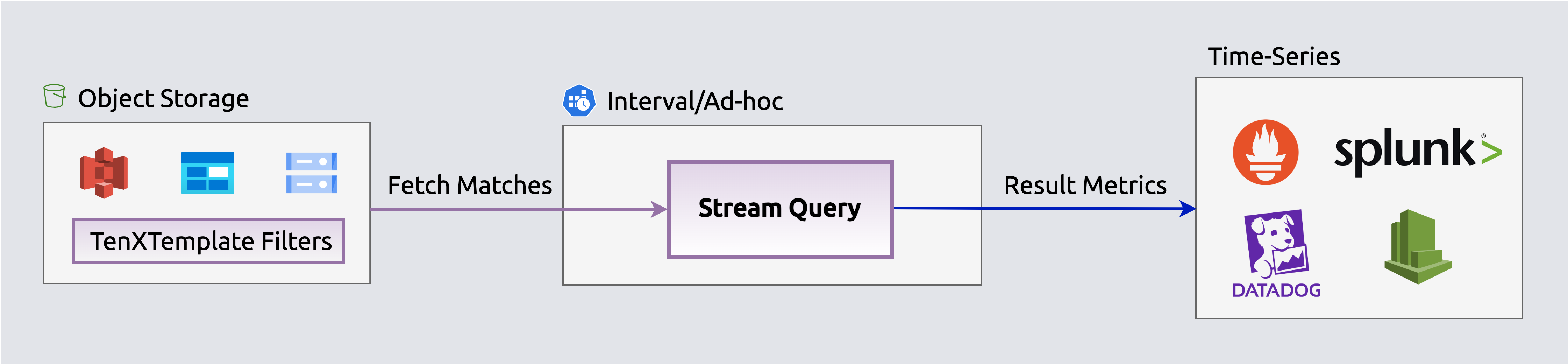

The app operates in two phases: Index builds searchable filters as files upload to storage, and Query retrieves matching events on demand and streams them to log analyzers and dashboards.

Index

The Index phase executes when log files upload to storage (e.g., S3 bucket) to enable in-place querying.

graph LR

A["<div style='font-size: 14px;'>⚡ Trigger</div><div style='font-size: 10px; text-align: center;'>File Upload</div>"] --> B["<div style='font-size: 14px;'>📡 Receive</div><div style='font-size: 10px; text-align: center;'>Read Events</div>"]

B --> C["<div style='font-size: 14px;'>🔄 Transform</div><div style='font-size: 10px; text-align: center;'>Parse & Structure</div>"]

C --> D["<div style='font-size: 14px;'>🎁 Enrich</div><div style='font-size: 10px; text-align: center;'>Add Context</div>"]

D --> E["<div style='font-size: 14px;'>📝 Write</div><div style='font-size: 10px; text-align: center;'>Search Indexes</div>"]

classDef trigger fill:#7c3aed88,stroke:#6d28d9,color:#ffffff,stroke-width:2px,rx:8,ry:8

classDef receive fill:#9333ea88,stroke:#7c3aed,color:#ffffff,stroke-width:2px,rx:8,ry:8

classDef transform fill:#2563eb88,stroke:#1d4ed8,color:#ffffff,stroke-width:2px,rx:8,ry:8

classDef enrich fill:#059669,stroke:#047857,color:#ffffff,stroke-width:2px,rx:8,ry:8

classDef write fill:#ea580c88,stroke:#c2410c,color:#ffffff,stroke-width:2px,rx:8,ry:8

class A trigger

class B receive

class C transform

class D enrich

class E write

⚡ Trigger: File uploads to object storage send event notifications directly to the Index SQS queue for asynchronous processing by index workers

📡 Receive: Read log events from the uploaded file in object storage (S3, Azure Blobs)

🔄 Transform: Parse log events into typed objects with typed fields (severity, timestamp, source, message)

🎁 Enrich: Add context via enrichment rules — geo-IP location, severity classification, k8s metadata, lookup tables

📝 Write: Generate lightweight search indexes that map keywords and fields to specific files — enabling queries to skip 99%+ of files
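The Write step can be pictured with a small sketch. It uses a plain inverted index as a stand-in, since the actual index format is not described here; `build_index`, `candidate_files`, and the file names are hypothetical names chosen for illustration.

```python
import re
from collections import defaultdict

TOKEN = re.compile(r"[a-z0-9_]+")

def tokenize(text):
    """Lowercase a string and split it into keyword tokens."""
    return set(TOKEN.findall(text.lower()))

def build_index(files):
    """Map each keyword and field=value pair to the set of files containing it.

    `files` maps a file name to a list of already-parsed events (dicts).
    """
    index = defaultdict(set)
    for name, events in files.items():
        for event in events:
            for field, value in event.items():
                index[f"{field}={str(value).lower()}"].add(name)
                for token in tokenize(str(value)):
                    index[token].add(name)
    return index

def candidate_files(index, terms):
    """Return only the files that contain every term; all other files are skipped."""
    sets = [index.get(term.lower(), set()) for term in terms]
    return set.intersection(*sets) if sets else set()

# Hypothetical sample data: three small files, one of which holds an error.
files = {
    "logs/app-001.json": [{"severity": "INFO", "message": "user login ok"}],
    "logs/app-002.json": [{"severity": "ERROR", "message": "payment timeout"}],
    "logs/app-003.json": [{"severity": "INFO", "message": "cache warmed"}],
}
index = build_index(files)
print(candidate_files(index, ["severity=error", "timeout"]))
```

A query for `severity=error` plus `timeout` only needs to open the one file the index points to, which is the mechanism behind skipping most files at query time.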

Architecture

S3 uploads send event notifications to an SQS queue, triggering index workers to generate lightweight search indexes that map keywords and fields to specific files — enabling queries to skip 99%+ of files.
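As a rough illustration of the trigger path, the sketch below extracts the bucket and object key from an S3 event notification delivered as an SQS message body. The `Records` layout follows the standard S3 notification format; the function name, bucket, and key are hypothetical, and receiving the message from the queue is left out.

```python
import json
from urllib.parse import unquote_plus

def uploaded_objects(message_body):
    """Yield (bucket, key) for every object-created record in one SQS message body."""
    payload = json.loads(message_body)
    # S3 test events carry no "Records" list, so default to an empty one.
    for record in payload.get("Records", []):
        if not record.get("eventName", "").startswith("ObjectCreated:"):
            continue
        s3 = record["s3"]
        # Object keys arrive URL-encoded in notifications (spaces become "+").
        yield s3["bucket"]["name"], unquote_plus(s3["object"]["key"])

# Hypothetical notification for a single uploaded log file.
body = json.dumps({
    "Records": [{
        "eventSource": "aws:s3",
        "eventName": "ObjectCreated:Put",
        "s3": {
            "bucket": {"name": "example-logs"},
            "object": {"key": "app/2024/01/my+file.json.gz"},
        },
    }]
})
print(list(uploaded_objects(body)))
```

An index worker would pass each yielded pair to the Receive step to read the file's events.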

Search indexes enable querying events in-place

The Edge-optimizer app reduces storage costs by over 50% by losslessly compacting events before they upload. Stream queries expand events on-the-fly for processing and streaming.

Reducers (Compact mode) losslessly compact events before they upload.
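To show what "lossless" means in practice, here is a minimal round-trip sketch. It uses gzip over JSON lines purely as a stand-in: the compaction format the Edge-optimizer actually uses is not described here, and `compact` and `expand` are hypothetical names.

```python
import gzip
import io
import json

def compact(events):
    """Serialize events as JSON lines and gzip them; nothing is dropped."""
    raw = "\n".join(json.dumps(e, sort_keys=True) for e in events).encode("utf-8")
    return gzip.compress(raw)

def expand(blob):
    """Stream events back out of a compacted blob one line at a time."""
    with gzip.open(io.BytesIO(blob), mode="rt", encoding="utf-8") as lines:
        for line in lines:
            yield json.loads(line)

# Hypothetical, highly repetitive sample events.
events = [
    {"severity": "INFO", "source": "checkout", "message": f"request {i} served"}
    for i in range(1000)
]
blob = compact(events)
restored = list(expand(blob))
print(restored == events)  # the round trip returns every event unchanged
```

Because `expand` is a generator, a query can process events as they are decompressed instead of materializing the whole file first.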

Query

Execute queries periodically (e.g., k8s CronJob) or on-demand via the Console to populate log analytics dashboards and alerts (e.g., Splunk, Datadog) with selected events.

graph LR

A["<div style='font-size: 14px;'>⏰ Trigger</div><div style='font-size: 10px; text-align: center;'>Cron/API Call</div>"] --> B["<div style='font-size: 14px;'>📥 Query</div><div style='font-size: 10px; text-align: center;'>Filter & Fetch Events</div>"]

B --> C["<div style='font-size: 14px;'>🔄 Transform</div><div style='font-size: 10px; text-align: center;'>Parse & Structure</div>"]

C --> D["<div style='font-size: 14px;'>🎁 Enrich</div><div style='font-size: 10px; text-align: center;'>Add Context</div>"]

D --> E["<div style='font-size: 14px;'>🚦 Regulate</div><div style='font-size: 10px; text-align: center;'>Filter Events</div>"]

E --> F["<div style='font-size: 14px;'>📤 Stream</div><div style='font-size: 10px; text-align: center;'>Send to Targets</div>"]

classDef trigger fill:#7c3aed88,stroke:#6d28d9,color:#ffffff,stroke-width:2px,rx:8,ry:8

classDef query fill:#2563eb88,stroke:#1d4ed8,color:#ffffff,stroke-width:2px,rx:8,ry:8

classDef transform fill:#059669,stroke:#047857,color:#ffffff,stroke-width:2px,rx:8,ry:8

classDef enrich fill:#ea580c88,stroke:#c2410c,color:#ffffff,stroke-width:2px,rx:8,ry:8

classDef regulate fill:#dc2626,stroke:#b91c1c,color:#ffffff,stroke-width:2px,rx:8,ry:8

classDef stream fill:#16a34a88,stroke:#15803d,color:#ffffff,stroke-width:2px,rx:8,ry:8

class A trigger

class B query

class C transform

class D enrich

class E regulate

class F stream

⏰ Trigger: Queries are initiated by scheduled CronJobs or Console calls

📥 Query: Identify and retrieve relevant events from storage by app, timeframe, keywords, or custom criteria

🔄 Transform: Parse fetched events into typed objects with typed fields

🎁 Enrich: Add context via enrichment rules — geo-IP, severity, k8s metadata

🚦 Regulate: Cost-aware sampling before streaming — severity-boosted retention (ERRORs always kept, DEBUG throttled), per-hour budget caps, and per-event-type share limits. Controls exactly what reaches your analyzer and what it costs

📤 Stream: Output regulated events to log analyzers (Splunk, Elastic, Datadog) via Fluent Bit, or aggregate into metrics and publish to time-series DBs (Datadog, Prometheus). Horizontal scaling ensures consistent fetch times
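The Query and Transform steps above can be sketched as a per-event filter. This is a simplified illustration: in the real flow the search index narrows the set of files first, and the `Event` type, the `query` signature, and the sample records are all assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

@dataclass(frozen=True)
class Event:
    timestamp: datetime
    severity: str
    source: str
    message: str

def transform(raw):
    """Parse one raw record (a dict of strings) into a typed Event."""
    return Event(
        timestamp=datetime.fromisoformat(raw["timestamp"]),
        severity=raw["severity"].upper(),
        source=raw["source"],
        message=raw["message"],
    )

def query(raw_events, source, start, end, keywords=()):
    """Yield typed events from one source inside [start, end) that contain all keywords."""
    for raw in raw_events:
        event = transform(raw)
        if event.source != source or not (start <= event.timestamp < end):
            continue
        text = event.message.lower()
        if all(k.lower() in text for k in keywords):
            yield event

# Hypothetical raw records as they might be read from a stored file.
raw_events = [
    {"timestamp": "2024-01-01T10:00:00+00:00", "severity": "error",
     "source": "checkout", "message": "Payment timeout for order 17"},
    {"timestamp": "2024-01-01T10:05:00+00:00", "severity": "info",
     "source": "checkout", "message": "Order 18 paid"},
    {"timestamp": "2024-01-01T12:00:00+00:00", "severity": "error",
     "source": "checkout", "message": "Payment timeout for order 19"},
]
start = datetime(2024, 1, 1, 10, tzinfo=timezone.utc)
end = datetime(2024, 1, 1, 11, tzinfo=timezone.utc)
matches = list(query(raw_events, "checkout", start, end, keywords=["timeout"]))
print(matches)
```

Only the first record matches: the second lacks the keyword and the third falls outside the time window.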

Architecture

Enrich, regulate, and stream selected events to log analyzers (e.g., Elastic, Splunk, Datadog) via an embedded Fluent Bit output to populate dashboards, queries, and alerts. The rate reducer applies cost-aware sampling before streaming — severity-boosted retention (ERRORs always forwarded, DEBUG throttled) with per-hour budget enforcement.

Stream selected events from storage to log analyzers
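One way to picture the rate reducer is the sketch below. The keep rates, the `Regulator` class, and the choice to count ERRORs against the hourly budget while never dropping them are all assumptions made for illustration, not the app's actual policy.

```python
import random
from collections import Counter

# Hypothetical keep probabilities per severity; ERROR is always kept.
KEEP_RATE = {"ERROR": 1.0, "WARN": 0.5, "INFO": 0.2, "DEBUG": 0.02}

class Regulator:
    """Decide per event whether to forward it, within an hourly budget."""

    def __init__(self, hourly_budget, max_type_share, seed=None):
        self.hourly_budget = hourly_budget    # max sampled events per hour
        self.max_type_share = max_type_share  # max fraction of budget per event type
        self.rng = random.Random(seed)
        self.hour = None
        self.sent = Counter()

    def allow(self, severity, event_type, hour):
        if hour != self.hour:  # new hour: reset the budget counters
            self.hour, self.sent = hour, Counter()
        if severity == "ERROR":  # ERRORs bypass sampling and caps
            self.sent[event_type] += 1
            return True
        if sum(self.sent.values()) >= self.hourly_budget:
            return False  # hourly budget exhausted
        if self.sent[event_type] >= self.max_type_share * self.hourly_budget:
            return False  # this event type has used its share
        # Unknown severities fall back to the INFO rate.
        if self.rng.random() >= KEEP_RATE.get(severity, KEEP_RATE["INFO"]):
            return False  # sampled out
        self.sent[event_type] += 1
        return True

regulator = Regulator(hourly_budget=10, max_type_share=0.5, seed=42)
errors_kept = sum(regulator.allow("ERROR", "db", hour=0) for _ in range(50))
debug_kept = sum(regulator.allow("DEBUG", "http", hour=0) for _ in range(50))
print(errors_kept, debug_kept)
```

In this sketch every ERROR is forwarded even past the budget, while lower severities stop flowing once the hour's budget or their event type's share is used up.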

Enrich, regulate, aggregate and publish events on-the-fly as metrics to time-series outputs (e.g., Datadog, Prometheus) to populate dashboards, queries and alerts. The rate reducer applies cost-aware sampling (severity-boosted, budget-capped) before aggregation, ensuring only high-value events contribute to metrics. Aggregated metrics are published directly to Datadog's metrics API, Prometheus, or other time-series endpoints.

Stream aggregated events as metrics to time-series DBs
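Aggregation into metrics might look like the following sketch, which counts events per minute, source, and severity. The metric name and the shape of each point are hypothetical and do not mirror the Datadog or Prometheus payload formats; publishing is left out.

```python
from collections import Counter
from datetime import datetime, timezone

def aggregate(events, metric="log.events.count"):
    """Count events per (minute, source, severity) and emit one point per bucket."""
    buckets = Counter()
    for e in events:
        # Truncate the timestamp to the start of its minute.
        minute = e["timestamp"].replace(second=0, microsecond=0)
        buckets[(minute, e["source"], e["severity"])] += 1
    return [
        {
            "metric": metric,
            "timestamp": int(minute.timestamp()),
            "value": count,
            "tags": {"source": source, "severity": severity},
        }
        for (minute, source, severity), count in sorted(buckets.items())
    ]

# Hypothetical regulated events; timestamps are timezone-aware (UTC).
events = [
    {"timestamp": datetime(2024, 1, 1, 10, 0, 5, tzinfo=timezone.utc),
     "source": "checkout", "severity": "ERROR"},
    {"timestamp": datetime(2024, 1, 1, 10, 0, 40, tzinfo=timezone.utc),
     "source": "checkout", "severity": "ERROR"},
    {"timestamp": datetime(2024, 1, 1, 10, 1, 2, tzinfo=timezone.utc),
     "source": "checkout", "severity": "INFO"},
]
points = aggregate(events)
for point in points:
    print(point)
```

The two ERROR events in the same minute collapse into a single point with a value of 2, so the time-series endpoint receives far fewer records than the raw event stream.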

Infrastructure & Security

Retriever runs entirely within your own AWS account — no log data leaves your infrastructure.

| Topic | Detail |
|---|---|
| Runs in your account | Kubernetes or Lambda deployment under your control |
| No automatic data access | You control which events to query and stream |
| Data stays in your S3 bucket | Index and queries operate only on your S3 files |
| Works on AWS | Optional Azure Blob Storage support on the roadmap |
| Kubernetes Secrets | Credentials never stored in config files |

See the Retriever FAQ for complete details on deployment, data access, and security guarantees.

This app is defined in retriever/app.yaml.